Test Driven Development: Looking Past the Branding Problem

Examining the benefits and criticisms of Test-Driven Development

Test Driven Development has always suffered from a branding problem: it is often seen as a testing approach rather than a way to develop software. The resulting tests are a byproduct of the development process, not an activity undertaken for its own sake. In this post I'll discuss some shortcomings of linear development and outline key benefits of TDD: minimalism, waste reduction, its implications for module design, and its close tie-in to continuous delivery. I will also cover some criticisms of TDD, and I'll end with two activities that people interested in TDD can try to get started.

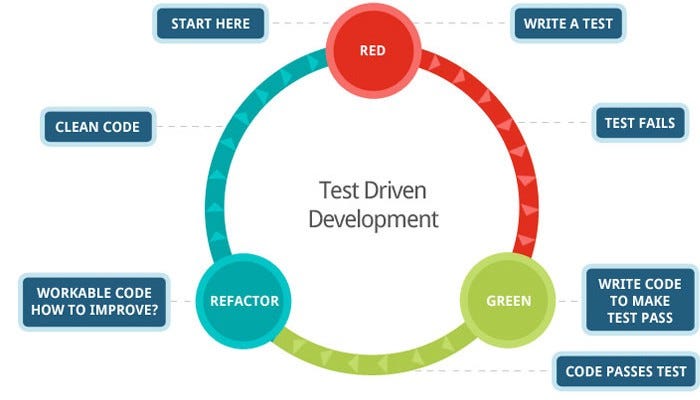

The idea behind TDD's Red-Green-Refactor cycle is straightforward: you write a test first, which fails because there's no code to test (the test is red); you write just enough code to make the test pass (the test is green); then you refactor the code while keeping the test green. This process is frequently described in the below diagram:

In essence, a bug is injected in the first step and fixed in the next. As you might have introduced some technical debt while focusing exclusively on making a test pass, time is dedicated in the Refactor phase to have clean code. Rinse and repeat.
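As a rough sketch, one pass through the cycle could look like the following in JavaScript, using Node's built-in `assert` module. The `totalPrice` function and its behaviour are hypothetical, picked only to keep the example small; the source order mirrors the order in which the pieces would be written.

```javascript
const { strictEqual } = require("node:assert");

// RED: the test is written first. Run before `totalPrice` exists, this test
// throws a ReferenceError, which is the failing test of the cycle.
function testTotalPrice() {
  strictEqual(totalPrice([]), 0);
  strictEqual(totalPrice([{ price: 2 }, { price: 3 }]), 5);
}

// GREEN: just enough code to make the test pass (kept here for comparison).
function totalPriceFirstPass(items) {
  let total = 0;
  for (let i = 0; i < items.length; i++) {
    total = total + items[i].price;
  }
  return total;
}

// REFACTOR: same behaviour, tidier code. The test above must stay green.
function totalPrice(items) {
  return items.reduce((sum, item) => sum + item.price, 0);
}

testTotalPrice();
```

The refactored version is only safe to ship because the same test that was red, then green, is still green afterwards.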

This doesn't seem intuitive as we're used to taking a requirement, implementing the associated feature, and then writing the tests to make sure the feature works. This linear and logical method of development has two major shortcomings.

Shortcomings of Code-First Development

First, in this process there is no built-in mechanism to ensure that all the code is tested. Checking code coverage is not the solution, as high code coverage does not guarantee high-quality tests. A developer has to "manually" ensure that they've covered all the different code paths after they've written the code, often large amounts of it. For software that follows this linear development approach of design-code-test, this testing gap can be visually described. In the below picture, the code outside the box is untested.
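To make the coverage point concrete, here is a small sketch in JavaScript. The `shippingCost` function and its free-shipping threshold of 50 are made up for illustration. The first pair of calls would earn full coverage from a typical coverage tool while verifying nothing.

```javascript
const { strictEqual } = require("node:assert");

// Hypothetical function under test: orders of 50 or more ship for free.
function shippingCost(orderTotal) {
  if (orderTotal >= 50) {
    return 0;
  }
  return 5;
}

// These two calls execute every line and both branches, so coverage would be
// reported as complete. Nothing is asserted, so they can never fail.
shippingCost(10);
shippingCost(100);

// Tests that actually pin down the behaviour, including the boundary at 50.
strictEqual(shippingCost(49.99), 5);
strictEqual(shippingCost(50), 0);
```

If the condition were accidentally written as `orderTotal > 50`, the first pair of calls would still run cleanly; only the boundary assertion would catch it.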

Second, the developer ends up creating design elements that are not needed (also reflected in the above picture). Since assumptions about the design were made at the start, part of the design invariably goes unused, resulting in bloat (as an example, think about all the stuff written "just in case").

The linear development approach does not provide a built-in check for minimalism that ensures we're only writing what we need. Such a check is in the spirit of the XP principle of YAGNI ("You Aren't Gonna Need It"), which states that a programmer should not add functionality until it is deemed necessary. Adhering to YAGNI without TDD is difficult.

TDD in its Red-Green-Refactor cycle forces you to write only the code you absolutely need while also ensuring that it is well-tested.

TDD Reducing Waste

So far we have only touched upon testing gaps between our design, code and tests. This is only part of the TDD story. Its real value is realized only when we view it in the context of how much time a team spends on identifying and fixing defects. The physics of TDD article by James Grenning could easily have been called the economics of TDD.

The idea is explained in a comparison of how bugs are introduced, discovered, root cause identified, and fixed in a linear development approach, versus how the same happens in a TDD cycle. Below are both approaches (taken from the article linked).

Linear development

TDD

The main difference between the two approaches is that when using TDD the developer introduces a defect consciously rather than inadvertently. Knowing that a defect exists (i.e., a failing test) focuses the developer on fixing it immediately. In essence, bug injection and bug discovery become the same thing. This is in stark contrast to linear development, where bug injection is inadvertent and bug discovery is deferred until much later, which leads to a higher chance of the bug being discovered by the customer.

The time between bug injection and discovery is proportional to the time between bug discovery and the root cause being identified: the longer a bug goes unnoticed, the longer it takes to track down. In TDD, both are minimized to reduce the amount of waste in the system.

Client-First Approach in Module Design

TDD can help achieve minimalistic and simple APIs. By writing the tests first, the developer is forced to think about what the calling code will look like. This exposes awkwardness in an API early in the process, when the API has yet to be built! In a linear approach, this viewpoint is missing until the developer writes their first test, usually after plenty of code has been written, which makes refactoring difficult.

This approach also reduces the complexity in our software. In A Philosophy of Software Design, John Ousterhout states:

> The best modules are those that provide powerful functionality yet have simple interfaces...module depth is a way of thinking about cost versus benefit. The benefit provided by a module is its functionality. The cost of a module (in terms of system complexity) is its interface. A module’s interface represents the complexity that the module imposes on the rest of the system: the smaller and simpler the interface, the less complexity that it introduces

Complexity can be reduced by designing smaller interfaces, and TDD provides an early check against complex interfaces. Consider writing a test such as the one below before any code is written:

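```java
// Written before InvoiceProcessor exists; assumes JUnit-style assertions
// and a `customer` created earlier in the test.
InvoiceProcessor processor = new InvoiceProcessor();

assertTrue(processor.hasEmailOnFile(customer));
assertEquals("SUCCESS", processor.chargeForLatestInvoice(customer));
```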

This is an early signal that the InvoiceProcessor class has too wide a scope and that checking a customer's email is perhaps not its job. Since no code has been written yet, it is easy to change course and pick something more suitable. By merely thinking about the API's use, we are forcing ourselves to think about simpler interfaces and module depth. By continuously paying attention to this as part of the Red-Green-Refactor cycle, we significantly reduce the chances of developing large, complex interfaces.
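One way the test might look after narrowing the scope is sketched below, in JavaScript to match the other example in this post. `CustomerDirectory` is a hypothetical name for whatever ends up owning contact details, and the stubbed `"SUCCESS"` return value is carried over from the test above; neither is a prescribed design.

```javascript
const { strictEqual } = require("node:assert");

// Hypothetical collaborator that owns customer contact details.
class CustomerDirectory {
  constructor(emailsById) {
    this.emailsById = emailsById;
  }

  hasEmailOnFile(customer) {
    return this.emailsById.has(customer.id);
  }
}

// InvoiceProcessor is left with a single job: charging invoices.
class InvoiceProcessor {
  chargeForLatestInvoice(customer) {
    // Placeholder result; a real implementation would talk to a payment system.
    return "SUCCESS";
  }
}

const customer = { id: 42 };
const directory = new CustomerDirectory(new Map([[42, "a@example.com"]]));
const processor = new InvoiceProcessor();

strictEqual(directory.hasEmailOnFile(customer), true);
strictEqual(processor.chargeForLatestInvoice(customer), "SUCCESS");
```

Each class now exposes one method, so the interface the rest of the system has to understand is smaller in both cases.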

Tie-In to Continuous Delivery

A core idea of Continuous Delivery is to ensure the code is always in a deployable state.

Continuous Delivery's goal is to make deployments—whether of a large-scale distributed system, a complex production environment, an embedded system, or an app—predictable, routine affairs that can be performed on demand.

We achieve all this by ensuring our code is always in a deployable state, even in the face of teams of thousands of developers making changes on a daily basis.

Practices like trunk-based development and feature flags serve this goal, with TDD being a natural fit. Practicing TDD means that a developer is always a couple of undos away from having all the tests passing, which is the primary requirement before anything can be deployed anywhere.

Working in small iterations also reduces merging risk considerably. If a Red-Green-Refactor-Commit cycle is followed, then the mainline always has the latest (tested) code. The concept of "dev complete" is replaced by having the code in a deployable state at all times.

Criticism of TDD

Small cycles generate waste

The main criticism of TDD is that the Red-Green-Refactor cycle can be too small, resulting in overhead. This is best illustrated with an example.

If the requirement is to implement a method which returns only the products priced under a certain price, it is fairly simple:

```javascript
const filterProducts = (products, price) => {
  return products.filter(p => p.price < price)
}
```

If we follow TDD strictly, we'd have to write at least a few failing tests to get to this point. The "Red" part of the cycle would be because of:

1. filterProducts doesn't exist

2. Case for === price fails

3. Case for < price

4. Case for > price

Going back and forth between test and production code so many times could be seen as waste. In these cases I find it perfectly acceptable to take bigger steps in the Red-Green-Refactor cycle, for example by writing all four tests before I write the fairly simple method.
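Taking the bigger step might look like the sketch below: all four checks are written in one go, then the method. It uses Node's built-in `assert` module; the sample products are invented, and the expectation that a product priced exactly at the limit is excluded is an assumption that follows from the `<` in the method above.

```javascript
const { strictEqual, deepStrictEqual } = require("node:assert");

// All four tests, written together before the method exists.
function testFilterProducts() {
  const pen = { name: "pen", price: 5 };
  const mug = { name: "mug", price: 10 };
  const bag = { name: "bag", price: 30 };

  // 1. filterProducts exists
  strictEqual(typeof filterProducts, "function");
  // 2. a product priced exactly at the limit is not returned
  deepStrictEqual(filterProducts([mug], 10), []);
  // 3. a product priced below the limit is returned
  deepStrictEqual(filterProducts([pen], 10), [pen]);
  // 4. a product priced above the limit is not returned
  deepStrictEqual(filterProducts([bag], 10), []);
}

// The method itself, written in a single step once the tests are in place.
const filterProducts = (products, price) => {
  return products.filter(p => p.price < price);
};

testFilterProducts();
```

The cycle is still Red-Green-Refactor; the red step simply covers four expectations at once instead of one.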

Focus on passing tests, not functional correctness

As critiqued here, TDD does tend to focus the programmer on making the next test pass, which carries a risk of losing the bigger picture of the module being built. A programmer could spend 20 minutes making a method pass and only realize later that this wasn't the method that was needed.

This criticism can apply to any style of development, TDD or not. Not having a larger feedback loop can result in focusing on the wrong things. In TDD's case, a focus on making tests pass may result in the wrong tests passing.

The way to avoid this trap is to write the test cases top-down through the architectural layers. For example, when developing a microservice, the tests would be written in this order:

1. Test for controller

2. Test for service layer

3. Test for data access layer

This way we get the big picture by focusing on how the consumer will call the API (i.e., the controller) and work our way down to the nitty gritty. If the opposite is done, it carries the aforementioned risk.
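A minimal sketch of that ordering in JavaScript follows. Every name here (`makeProductController`, `makeProductService`, `findCheaperThan`, the request and response shapes) is hypothetical, and the layers below the one under test are replaced by hand-written stubs rather than any particular mocking library.

```javascript
const { deepStrictEqual } = require("node:assert");

// Step 1: controller test, written first. It fixes what the API consumer
// sees; the service layer is a stub because it does not exist yet.
function testControllerReturnsCheapProducts() {
  const stubService = { findCheaperThan: () => [{ name: "pen", price: 5 }] };
  const controller = makeProductController(stubService);
  const response = controller.get({ query: { maxPrice: "10" } });
  deepStrictEqual(response, { status: 200, body: [{ name: "pen", price: 5 }] });
}

// Step 2: service test, written next, with the data access layer stubbed.
function testServiceFiltersByPrice() {
  const stubRepository = {
    all: () => [{ name: "pen", price: 5 }, { name: "bag", price: 30 }],
  };
  const service = makeProductService(stubRepository);
  deepStrictEqual(service.findCheaperThan(10), [{ name: "pen", price: 5 }]);
}

// Just enough production code to make each test pass, in the same order.
function makeProductController(service) {
  return {
    get(request) {
      const limit = Number(request.query.maxPrice);
      return { status: 200, body: service.findCheaperThan(limit) };
    },
  };
}

function makeProductService(repository) {
  return {
    findCheaperThan(limit) {
      return repository.all().filter(p => p.price < limit);
    },
  };
}

testControllerReturnsCheapProducts();
testServiceFiltersByPrice();
```

A test for the data access layer would come third, following the same pattern against a real or in-memory store.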

Expected specifications, got low-level tests

Following TDD will result in tests that cover every aspect of the software, and often the test suite becomes large. If the goal of the team is to document product specifications in TDD, then one can lose the forest for the trees. How should we figure out what this API does if it has 65 tests against it?

In contrast, if a linear approach of design-code-test is followed, you get to pick which tests to write in the end and you can select the ones which reflect the specifications. This has its own risks but will result in a more minimal set of tests which reflects what the API does without burdening the reader with edge cases. Is this trade-off worth it? I don't think so, but some might.

---

The learning curve of test driven development can sound steep but isn't. Anyone new to this approach should try two different exercises in the following order:

1. Do the String Calculator Kata

2. Do the Bowling Game Kata

Performing both of these will provide enough practice to make the conceptual ideas discussed here tangible.